Summary: What is a service mesh and why does it matter?

A service mesh is a configurable infrastructure layer for service-to-service communication that abstracts network complexity and related challenges away from your application. In other words, it lets you separate the application from the networking, so that an individual workload (a microservice, a container, a VM, or a non-containerized, non-microservice application) does not have to be aware of the network. A service mesh can typically be implemented without changing a single line of your application code.

Bottom line: a service mesh decouples development from operations.

In this article, I will walk you through what a service mesh is and some of the key capabilities it offers for your microservices network.

Why is a service mesh required in a distributed world?

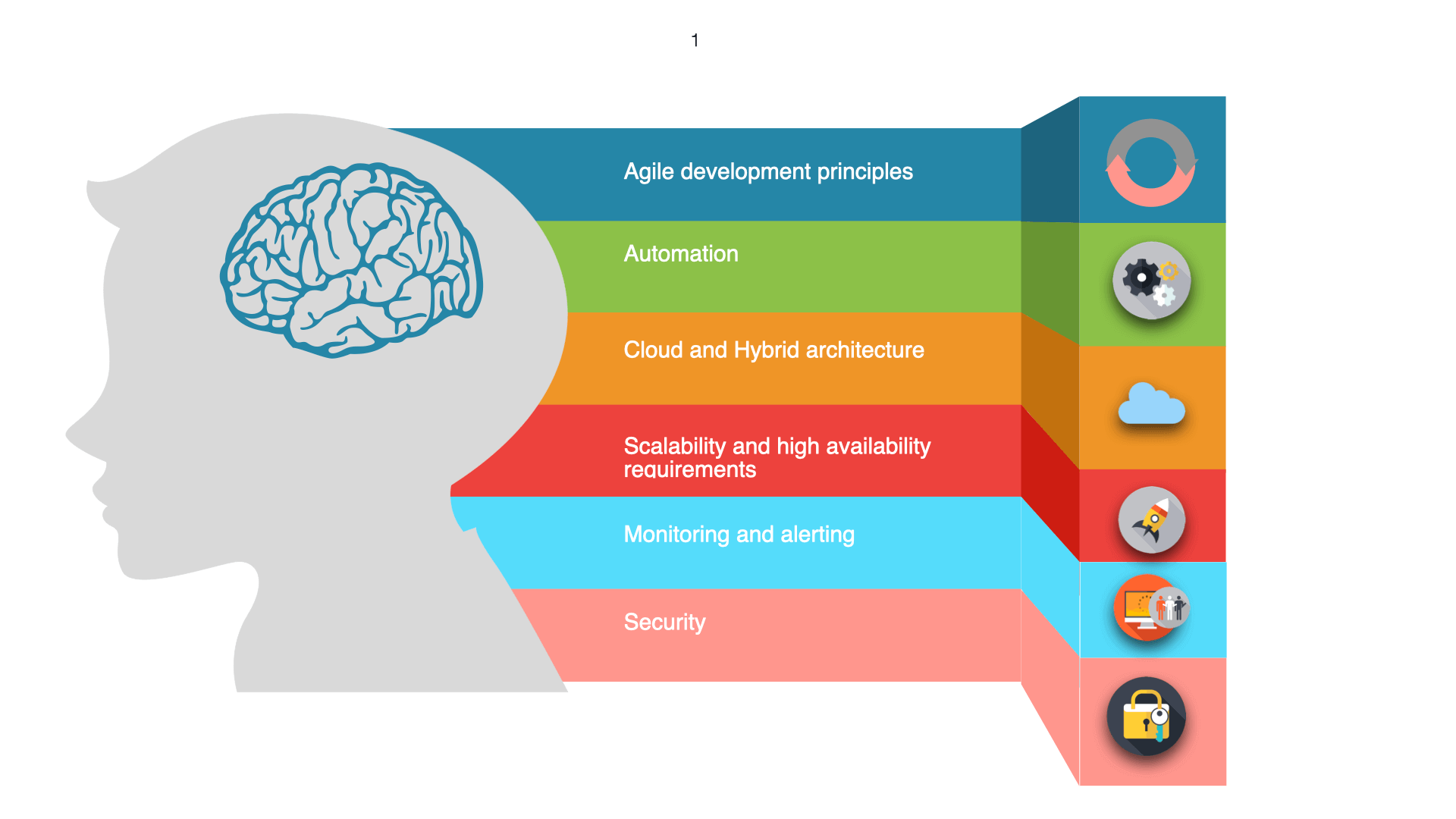

Today, customers are focused on multi-cloud, public cloud, private cloud, and hybrid cloud deployments. They expect high availability, resilience, scalability, and manageability, and they want to combine the best of each option, because they cannot move every service to the cloud due to business or product limitations. To meet these needs, you can build your own custom solution, buy a commercial one, or adopt an open platform.

In recent years, microservices have gained popularity, and for many companies they have become the de facto standard for building enterprise services.

Since 2017, Kubernetes has risen to play a key role in the cloud-native computing community.

The world is moving toward loosely coupled microservices architectures, which offer benefits such as better reuse, scalability, agility, and manageability. The challenge is how to deploy, secure, connect, manage, and control those microservices seamlessly. Companies that have already adopted microservices have found that a dedicated software layer is required to manage service-to-service communication.

We therefore need a uniform way to build microservices with security, resilience, and observability enabled. Even a simple service-to-service request should be able to handle encryption, metrics, access control, network timeouts, and so on. This is where a service mesh comes in.

What capabilities does a service mesh provide?

A service mesh offers a configurable infrastructure layer for service-to-service communication, abstracting away network complexity and its challenges. It makes communication between service instances flexible, reliable, and fast. Your workloads may run in the cloud, on-premises, on virtual machines, or in containers; the mesh assumes the environment is heterogeneous, gives services a unifying abstraction, manages them at scale, and keeps networking concerns out of the application's business logic.

The main goal is to gain visibility into what you have deployed. Beyond that, a service mesh provides the capabilities highlighted below:

- Service discovery: This capability allows services to “discover” each other when needed. In a Kubernetes environment, the platform keeps a list of instances that are healthy and ready to receive requests.

- Load balancing: The service mesh distributes requests across the available instances, for example by favoring the least busy instance, so that busy instances are not overloaded while idle ones go unused.

- Encryption: Instead of each service implementing its own encryption and decryption, the service mesh can encrypt and decrypt requests and responses on their behalf.

- Authentication and authorization: The service mesh can validate requests before they are sent to the service instances.

- Circuit breaker: There is no need to implement circuit breaking inside the application; the service mesh takes care of it.

- and much more …
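
To make the first two capabilities more concrete, here is a minimal Python sketch of service discovery combined with least-request load balancing. It is only an illustration under my own assumptions: the `Instance` and `Registry` classes, the `pick_least_busy` function, the `orders` service name, and the addresses are all hypothetical and do not come from any real mesh API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Instance:
    """One running copy of a service (hypothetical model, not a mesh API)."""
    address: str
    healthy: bool = True
    active_requests: int = 0


@dataclass
class Registry:
    """Maps a service name to the instances registered for it."""
    services: Dict[str, List[Instance]] = field(default_factory=dict)

    def register(self, service: str, instance: Instance) -> None:
        self.services.setdefault(service, []).append(instance)

    def discover(self, service: str) -> List[Instance]:
        # Service discovery: callers only ever see healthy instances.
        return [i for i in self.services.get(service, []) if i.healthy]


def pick_least_busy(instances: List[Instance]) -> Instance:
    # Least-request load balancing: pick the instance with the fewest
    # in-flight requests; ties go to the first instance in the list.
    if not instances:
        raise LookupError("no healthy instances available")
    return min(instances, key=lambda i: i.active_requests)


# The "orders" service name and the addresses below are made up.
registry = Registry()
registry.register("orders", Instance("10.0.0.1:8080", active_requests=5))
registry.register("orders", Instance("10.0.0.2:8080", active_requests=1))
registry.register("orders", Instance("10.0.0.3:8080", healthy=False))

target = pick_least_busy(registry.discover("orders"))
print(target.address)  # prints 10.0.0.2:8080
```

The idle third instance is skipped because it is unhealthy, which is the point of combining discovery with load balancing: the caller never has to know which instances exist or how busy they are.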

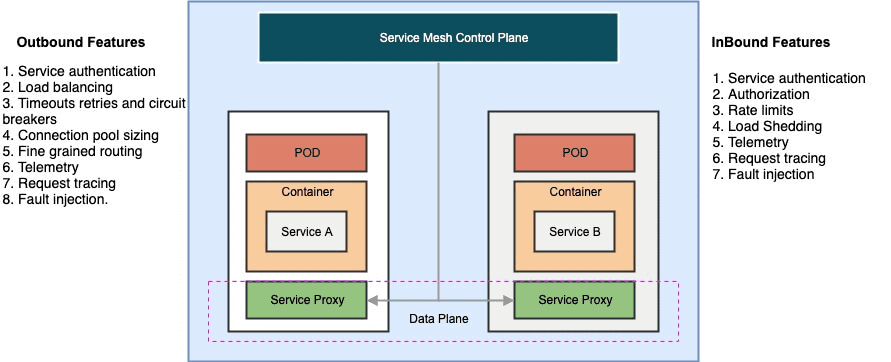

Service mesh Inbound and Outbound features

How does a service mesh work? What is a sidecar proxy?

A service mesh is generally implemented by providing a proxy instance (commonly Envoy), called a sidecar, for each service instance. A sidecar is simply another container that is attached, manually or automatically, to each microservice that you have developed and deployed.

The sidecar handles all the traffic that goes in and out of the microservice. As a result, it provides better visibility, resilience, traffic control, security, and observability, which is the real value of a service mesh. In addition, to reduce the potential for network-based attacks, the sidecar has the same privileges as the application to which it is attached.
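
The sidecar idea can be sketched in a few lines of Python. This is a simplified, in-process illustration rather than a real network proxy: the `Sidecar` class, the `app` function, the `frontend` caller name, and the metric names are hypothetical names I chose for the example. What it shows is that access control, retries, and metrics live in the wrapper, while the application function stays untouched.

```python
from collections import Counter
from typing import Callable, Set


def app(request: str) -> str:
    """The application: business logic only, no networking concerns."""
    return f"hello {request}"


class Sidecar:
    """Hypothetical sidecar that wraps a handler and applies policy around it."""

    def __init__(self, handler: Callable[[str], str],
                 allowed_callers: Set[str], retries: int = 2):
        self.handler = handler
        self.allowed_callers = allowed_callers
        self.retries = retries
        self.metrics = Counter()

    def handle(self, caller: str, request: str) -> str:
        self.metrics["requests_total"] += 1
        # Security: access control is enforced in the proxy, not in the app.
        if caller not in self.allowed_callers:
            self.metrics["requests_denied"] += 1
            raise PermissionError(f"caller {caller!r} is not allowed")
        # Resilience: retry transient failures on the application's behalf.
        last_error = None
        for _ in range(self.retries + 1):
            try:
                return self.handler(request)
            except ConnectionError as exc:
                self.metrics["upstream_errors"] += 1
                last_error = exc
        raise last_error


sidecar = Sidecar(app, allowed_callers={"frontend"})
print(sidecar.handle("frontend", "world"))  # prints hello world
print(dict(sidecar.metrics))                # prints {'requests_total': 1}
```

A real sidecar does the same job at the network level, intercepting the traffic of a separate process, which is why the application code does not need to change.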

What is Envoy proxy?

The default service proxy for Istio is based on Envoy. It is a network proxy that intercepts communication and applies policies.

Envoy is one of the most popular sidecar proxies today. It was built at Lyft and is a high-performance proxy written in C++. It communicates with the control plane over a protocol called xDS (v2 here; newer Envoy releases use v3). Using Envoy is therefore not mandatory: any proxy that supports the xDS protocol will work. You can use the Envoy build that Istio provides, your own Envoy build, or any other proxy that is compliant with the xDS protocol.

Envoy proxy architecture: Envoy divides its threading model into three categories of threads.

Main

The main thread coordinates the most critical process functionality, such as server startup and shutdown, xDS API handling, and general process management. Envoy runs a single main thread, which coordinates the other threads rather than handling connection traffic itself.

Worker

Each worker thread processes all I/O for every connection it accepts, for the lifetime of that connection. The workers do the heavy lifting of moving traffic back and forth between containers. They do not perform blocking file writes themselves; that work is handed to the file flusher described next, which keeps the workers fast and lowers the load on the underlying servers.

File Flusher

Writes that workers make to files (primarily access logs) are buffered in memory and then physically written to disk by a file flusher thread.
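
The split between worker threads and a file flusher can be illustrated with a small Python sketch. It is not Envoy code (Envoy is written in C++), and the `BufferedLog` class, the `worker` function, and the log line format are assumptions made for the example. Workers only append to an in-memory queue, and a single flusher thread performs the actual writes.

```python
import io
import queue
import threading


class BufferedLog:
    """Workers append to an in-memory queue; one flusher thread writes to the sink."""

    def __init__(self, sink: io.TextIOBase):
        self._sink = sink
        self._buffer = queue.Queue()
        self._flusher = threading.Thread(target=self._flush_loop, daemon=True)
        self._flusher.start()

    def write(self, line: str) -> None:
        # Called by worker threads: cheap, never touches the sink directly.
        self._buffer.put(line)

    def _flush_loop(self) -> None:
        # Runs on the flusher thread: the only place that writes to the sink.
        while True:
            line = self._buffer.get()
            if line is None:  # sentinel: stop flushing
                break
            self._sink.write(line + "\n")

    def close(self) -> None:
        self._buffer.put(None)
        self._flusher.join()


def worker(log: BufferedLog, worker_id: int, connections: int) -> None:
    # A worker handles all I/O for the connections it owns.
    for conn in range(connections):
        log.write(f"worker={worker_id} conn={conn} done")


sink = io.StringIO()  # stands in for an access log file
log = BufferedLog(sink)
workers = [threading.Thread(target=worker, args=(log, w, 3)) for w in range(2)]
for t in workers:
    t.start()
for t in workers:
    t.join()
log.close()
lines = sink.getvalue().splitlines()
print(len(lines))  # prints 6
```

The order in which lines from different workers interleave is not fixed, but each worker's own lines keep their order, and no worker ever blocks on the sink.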

Some of the features in Envoy proxies include:

- Dynamic service discovery

- Load balancing

- TLS termination

- Traffic routing and configuration.

- HTTP/2 and gRPC proxies

- Circuit Breakers

- Health checks

- Staged rollouts with %-based traffic split

- Fault injection

- Rich metrics

- Network resiliency: setup retries, failovers, circuit breakers, and fault injection

- Security & authentication: security policies, access control, and rate-limiting
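
Of the features listed above, circuit breaking is the one that benefits most from an example. The following Python sketch shows the classic closed/open/half-open pattern. It illustrates the general idea only and is not Envoy's implementation (Envoy configures circuit breaking through limits such as maximum connections and pending requests). The `CircuitBreaker` class, its parameters, and the fake clock are my own assumptions.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without trying the service."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, *args):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Open: fail fast without touching the unhealthy service.
                raise CircuitOpenError("circuit open")
            # Half-open: the cool-down has passed, let one trial call through.
        try:
            result = func(*args)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result


def failing():
    raise ConnectionError("upstream down")


now = [0.0]  # fake clock so the example does not need to sleep
breaker = CircuitBreaker(max_failures=2, reset_after=10.0, clock=lambda: now[0])

outcomes = []
for _ in range(3):
    try:
        breaker.call(failing)
    except ConnectionError:
        outcomes.append("upstream error")
    except CircuitOpenError:
        outcomes.append("rejected by breaker")

now[0] = 11.0  # cool-down elapsed: the next call is a trial
outcomes.append(breaker.call(lambda: "ok"))
print(outcomes)
# prints ['upstream error', 'upstream error', 'rejected by breaker', 'ok']
```

The third call never reaches the failing service, which is the behavior that protects an unhealthy upstream from being hammered while it recovers.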

What is Istio? / Why do we need Istio?

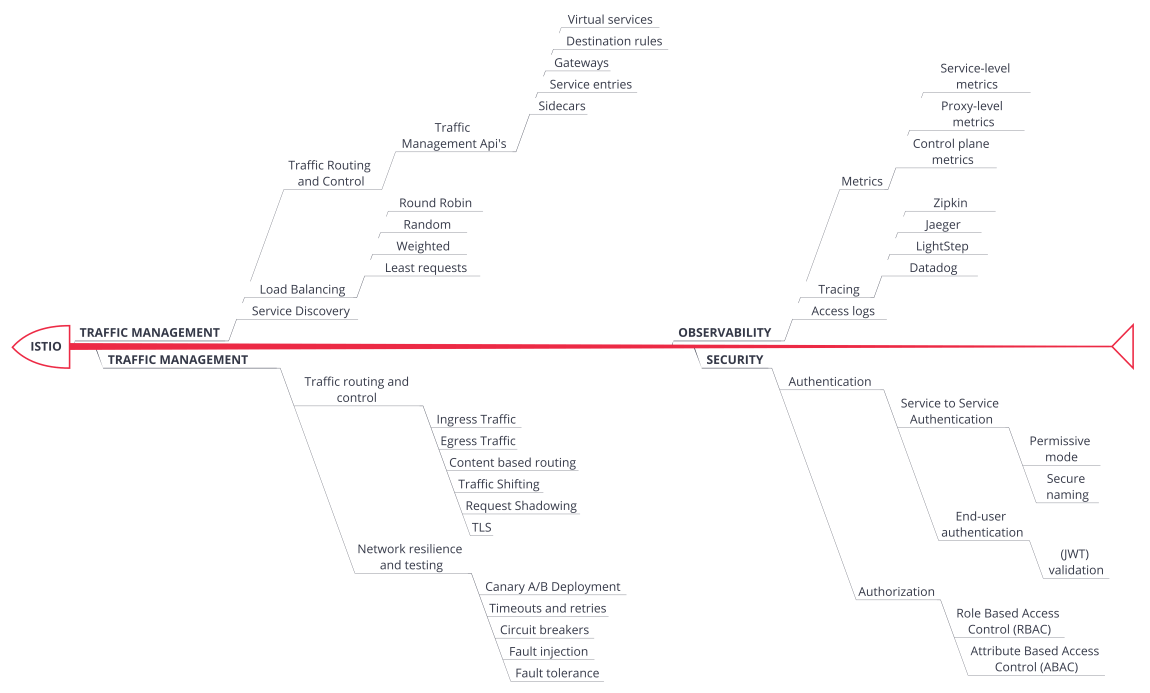

Istio is one of the most popular service mesh technologies. It is an open platform for managing inbound and outbound service interactions. It makes it easier to connect, manage, and secure traffic between microservices running in containers and VM-based workloads, and to obtain telemetry about them. It is a configurable infrastructure layer that operates at L7 of the network stack and assumes that L3/L4 connectivity is already in place.

It provides observability, dynamic service discovery, and secure service-to-service communication without requiring changes to application code, which reduces the complexity of the business logic on the application side.

What are the benefits of adopting Istio for cloud-native applications?

- Separates operations from development

- Abstracts your application from the infrastructure

- Routes traffic without changing the application codebase

- Increased agility

- Securing service to service communication

- Language-independent (your microservices can be written in any language)

- Observability of your network communication

- Fleet-wide policy enforcement

- Traffic management
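
Routing traffic without touching the application is easiest to picture as a weighted split, for example sending a small share of requests to a new version. Below is a minimal Python sketch of that idea. It is not Istio configuration or Istio's algorithm, and the `reviews-v1`/`reviews-v2` names, the 90/10 weights, and both functions are hypothetical.

```python
import random

# Hypothetical routing rule: 90% of traffic to v1, 10% to the v2 canary.
ROUTES = [("reviews-v1", 90), ("reviews-v2", 10)]


def choose_destination(routes, point):
    """Map a point in [0, total weight) to a destination by cumulative weight."""
    upper = 0
    for destination, weight in routes:
        upper += weight
        if point < upper:
            return destination
    raise ValueError("point is outside the total weight")


def route_request(routes, rng=random):
    # rng.random() is in [0.0, 1.0), so the point stays below the total weight.
    total = sum(weight for _, weight in routes)
    return choose_destination(routes, rng.random() * total)


rng = random.Random(42)  # seeded only to make the demo repeatable
counts = {"reviews-v1": 0, "reviews-v2": 0}
for _ in range(10_000):
    counts[route_request(ROUTES, rng)] += 1
print(counts)  # roughly a 90/10 split
```

Changing the rollout from 10% to 50% means editing the weights in the routing rule, not redeploying or modifying the services themselves.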

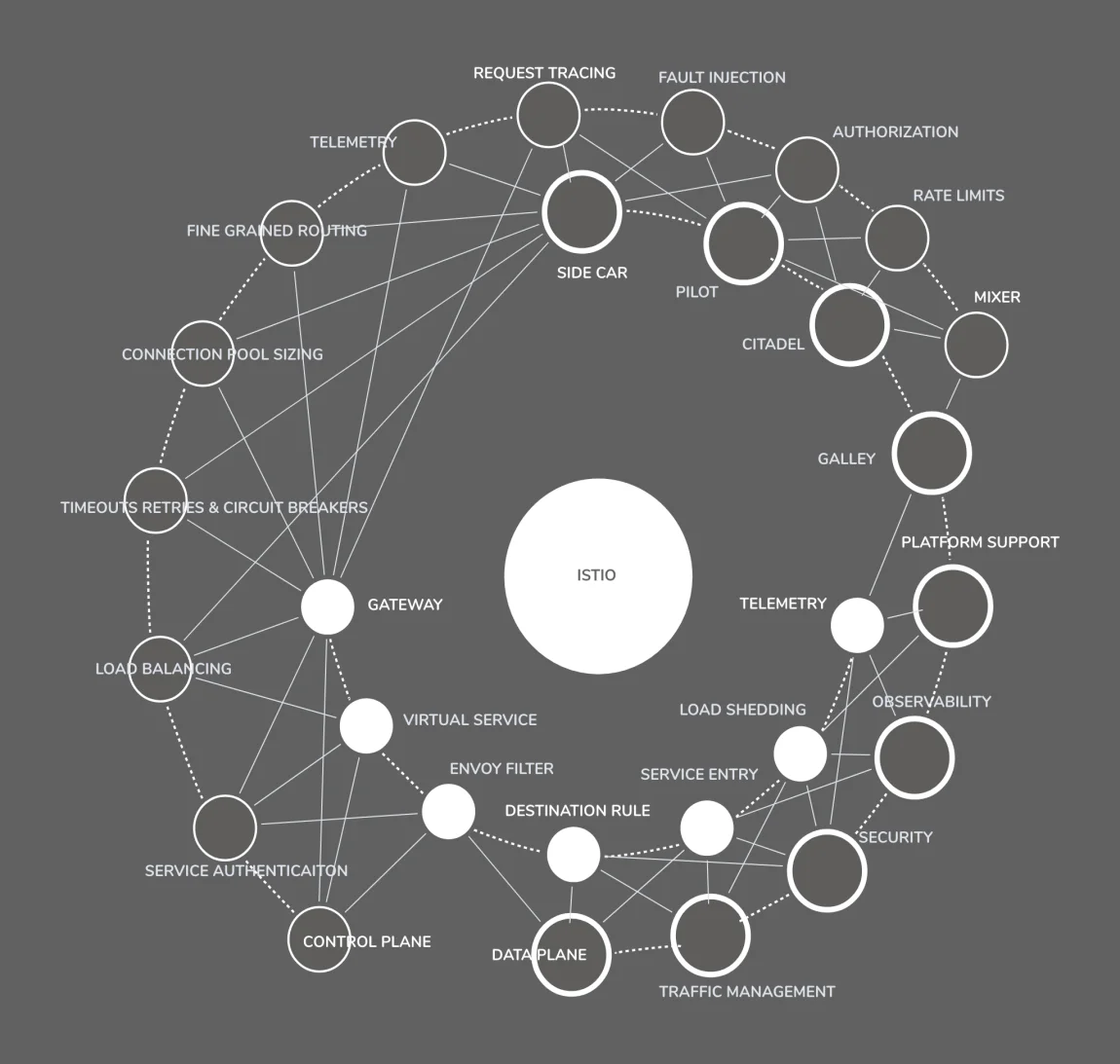

Features of Istio

How do you pronounce Istio?

Istio comes from the Greek word “ιστίο”, meaning “sail”. It relates to the name Kubernetes, which comes from the Greek word “κυβερνήτης”, meaning “pilot”, and continues the same nautical theme.

Who made Istio?

Istio was started by teams from Google and IBM in partnership with the Envoy team from Lyft. It has been developed fully in the open on GitHub (https://github.com/istio).

Takeaways

A service mesh is becoming essential for organizations that run microservices workloads, and it can also cover monolithic ones. Below are some of the popular open-source and commercial service mesh offerings for your reference.

Service Mesh Offerings List

Open-source service mesh offerings list:

Commercial service mesh offerings list: